AI-Native Kanban

AI-native kanban for developers

Sprint planning was designed for human-paced delivery. When AI agents can ship a feature in hours that used to take a week, two-week cadences become a bottleneck — not a discipline.

Why sprint-based tools don't fit AI-augmented workflows

Sprint planning assumes a relatively stable throughput rate. You estimate tickets, commit to a scope, and track velocity over time to predict future capacity. The whole system is calibrated to human delivery speed — a developer can write a certain number of lines per day, and that number is roughly predictable.

Add AI agents to the delivery pipeline and that assumption breaks. In an afternoon, a Claude Code session can implement a well-specified feature that would have taken three days to write by hand. Velocity loses its meaning as a metric when throughput varies by an order of magnitude depending on task type and AI involvement. A sprint committed at human speed looks embarrassingly empty when half the tickets close in the first two days.

Worse, sprint ceremonies — planning, grooming, retrospectives — are designed for a batch model of work. You collect work, estimate it, and release it on a cadence. But AI-augmented teams often work in a more continuous flow: a brief arrives, Claude implements it, a human reviews, it ships. The time between brief and deployment can be hours. A two-week sprint is an awkward wrapper around a process that doesn't need it.

Flow vs. sprint: when cadence-based planning breaks

Kanban is fundamentally a flow model. Work enters a queue, moves through stages, and exits when done. The unit of measurement is not velocity — it's cycle time: how long does a card take to go from "started" to "done"? And the primary lever is WIP limits: how many things can be actively in progress at any given time?

This model fits AI-augmented delivery much better. When Claude Code can implement a card in a few hours, the bottleneck often shifts to the human review step. WIP limits on the review column force that bottleneck to be visible — you can't add more work until the review queue clears. Cycle time tells you whether your review process is keeping up with AI throughput, or whether it's becoming the constraint.

Sprint tools can't show you this because they're not tracking flow — they're tracking commitment vs. delivery within a fixed window. If everything ships in the first week, the sprint still runs for two weeks. The signal that should prompt a process change — "our review stage is 3x slower than our implementation stage" — is invisible in a sprint model.
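To make that signal concrete, here is a minimal sketch of comparing average time per stage. The stage names, the sample hours, and the `stage_ratio` helper are illustrative assumptions, not Glacier's data model or API.

```python
from statistics import mean

# Hypothetical sample: hours each card spent in each stage.
stage_hours = {
    "implementation": [3.0, 4.0, 2.0],
    "review": [9.0, 12.0, 6.0],
}


def stage_ratio(hours_by_stage, slow_stage, fast_stage):
    """Return how many times longer the average card sits in slow_stage."""
    return mean(hours_by_stage[slow_stage]) / mean(hours_by_stage[fast_stage])


ratio = stage_ratio(stage_hours, "review", "implementation")

# Assumed threshold: treat review as the constraint once it is 2x slower.
review_is_bottleneck = ratio >= 2.0
print(f"review is {ratio:.1f}x slower than implementation")
```

A flow-based board can surface this ratio continuously, whereas a sprint report only shows whether the committed scope closed inside the window.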

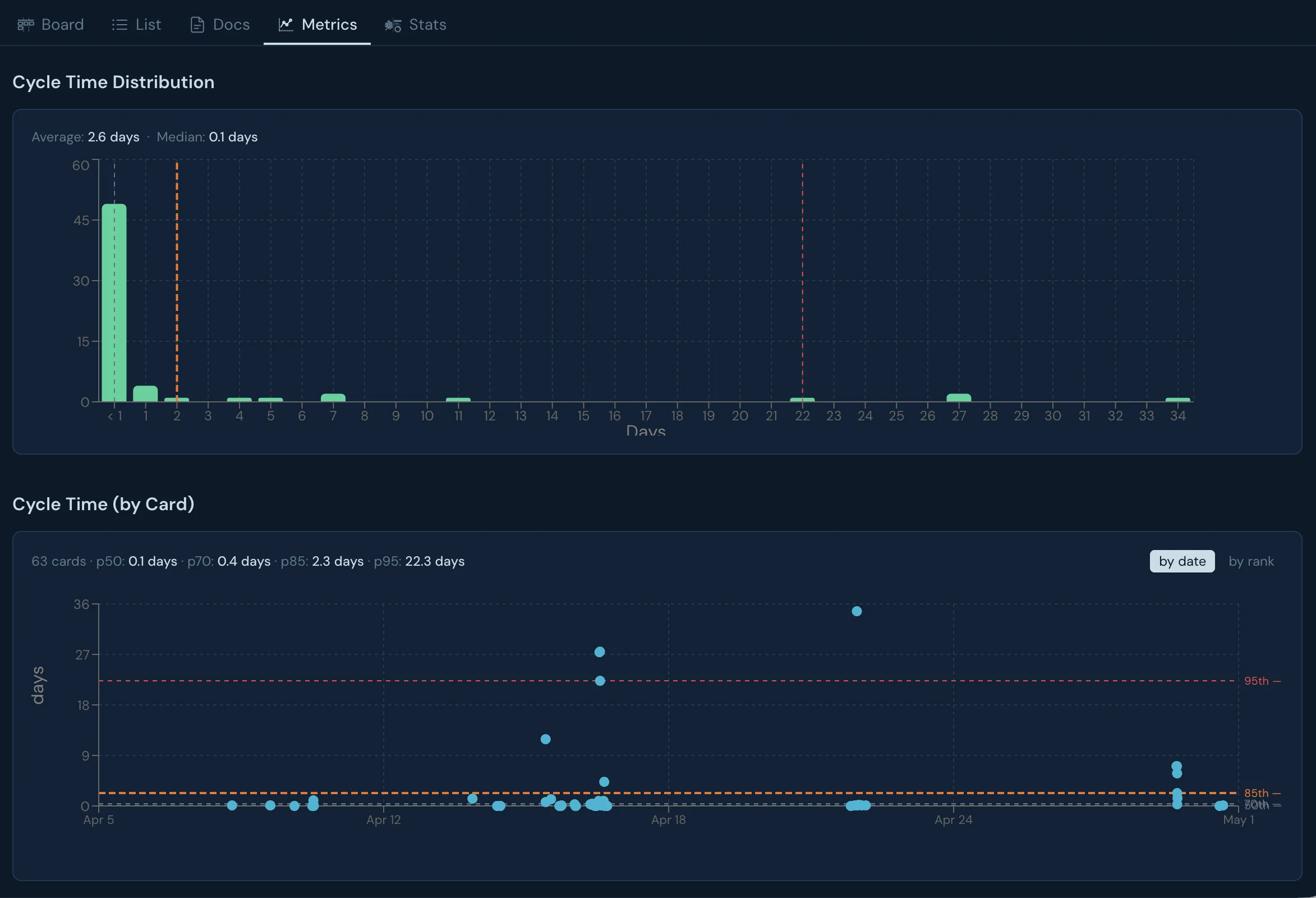

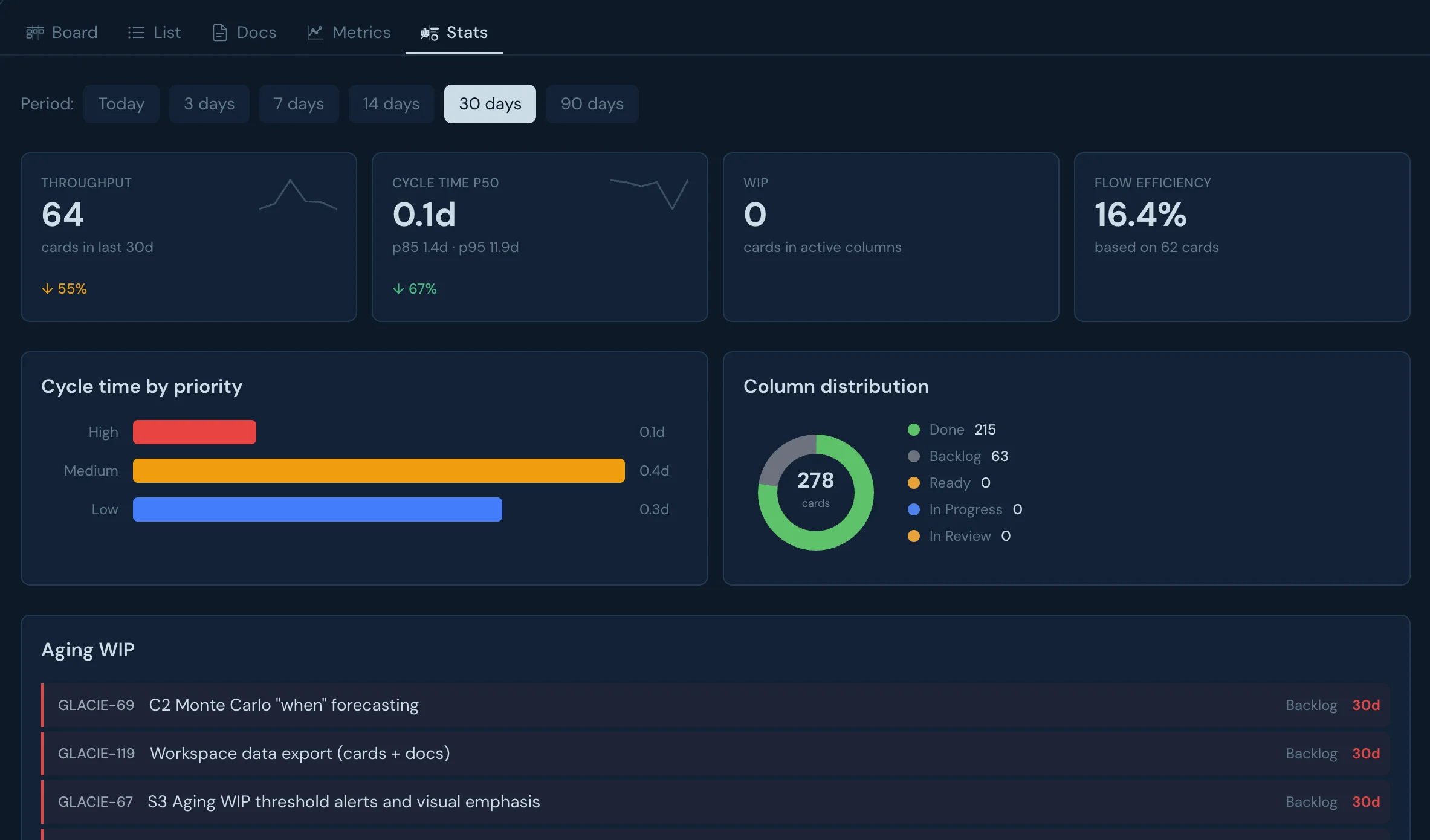

WIP limits and cycle time: the metrics that matter

WIP limits

Cap how many cards can be active in a column simultaneously. Forces bottlenecks to surface. When a column is full, new work can't enter until existing work exits — making constraints visible and creating pull-based flow.

Cycle time

Time from when a card enters "active" to when it reaches "done". In AI-augmented teams, short implementation cycle times paired with long review cycle times signal a process bottleneck, not a capacity problem.

These two metrics, together, tell you more about your team's health than velocity ever did. A team with short cycle times and respected WIP limits is a team that ships predictably. A team with high WIP and long cycle times is a team that's hiding its bottlenecks behind busyness.
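Both metrics are simple to express in code. The sketch below is one possible model, assuming hypothetical `Card` and `Column` classes with invented field names; it is not how any particular tool implements them.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass
class Card:
    title: str
    started_at: Optional[datetime] = None  # when the card entered "active"
    done_at: Optional[datetime] = None     # when the card reached "done"

    def cycle_time_hours(self) -> Optional[float]:
        """Hours from active to done, or None if the card is unfinished."""
        if self.started_at is None or self.done_at is None:
            return None
        return (self.done_at - self.started_at).total_seconds() / 3600


@dataclass
class Column:
    name: str
    wip_limit: Optional[int] = None  # None means the column is uncapped
    cards: List[Card] = field(default_factory=list)

    def is_full(self) -> bool:
        return self.wip_limit is not None and len(self.cards) >= self.wip_limit

    def pull(self, card: Card) -> None:
        """Accept a card only if there is capacity (pull-based flow)."""
        if self.is_full():
            raise RuntimeError(
                f"{self.name} is at its WIP limit of {self.wip_limit}"
            )
        self.cards.append(card)


review = Column("Review", wip_limit=2)
review.pull(Card("Add CSV export"))
review.pull(Card("Fix login redirect"))

shipped = Card(
    "Rate limiter",
    started_at=datetime(2024, 1, 1, 9, 0),
    done_at=datetime(2024, 1, 1, 15, 30),
)
```

In this sketch a third `review.pull(...)` raises until a card leaves the column, which is the behaviour that makes a review bottleneck visible instead of letting work pile up silently.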

Glacier tracks both. Each column can have a WIP limit that turns red when breached. Cycle time is computed per card and per column type. You can see at a glance where work is getting stuck — and so can the AI agents connected to your board via MCP.

How Glacier combines kanban methodology with AI-native architecture

Glacier is a kanban board built on the assumption that AI agents are first-class participants in delivery. That shapes several design decisions:

- Cards link to structured docs. When Claude Code implements a feature, it can read the full brief, not just a ticket title. The doc is a first-class object with its own ID, fetchable via MCP.

- MCP server is core, not an add-on. The MCP server Claude Code uses to read and update the board is the same one you'd use to build custom automations. There's no separate "developer API": the AI interface is the interface.

- Column types encode flow stages. Queue, active, waiting, and done column types let the system understand where work is in the flow, not just which column it's in. This enables smarter WIP limit enforcement and cycle time tracking.

- Work hierarchy supports AI decomposition. An AI agent can create a parent card from a brief, break it into child cards, and update subtask completion as implementation progresses, all through the same MCP tools.
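As a rough illustration of the last two points, the sketch below models the four column types and a parent card broken into child cards. The `ColumnType` enum mirrors the stage names above; the `Card` class, the `decompose` helper, and the progress calculation are assumptions made for the example and do not reflect Glacier's actual MCP tools or schema.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ColumnType(Enum):
    QUEUE = "queue"
    ACTIVE = "active"
    WAITING = "waiting"
    DONE = "done"


@dataclass
class Card:
    title: str
    column_type: ColumnType = ColumnType.QUEUE
    children: List["Card"] = field(default_factory=list)

    def progress(self) -> float:
        """Fraction of child cards that have reached a done column."""
        if not self.children:
            return 1.0 if self.column_type is ColumnType.DONE else 0.0
        done = sum(1 for c in self.children if c.column_type is ColumnType.DONE)
        return done / len(self.children)


def decompose(brief_title: str, subtasks: List[str]) -> Card:
    """Hypothetical helper: build a parent card with one child per subtask."""
    parent = Card(brief_title, column_type=ColumnType.ACTIVE)
    parent.children = [Card(title) for title in subtasks]
    return parent


parent = decompose(
    "Add CSV export",
    ["Write exporter", "Add endpoint", "Write tests"],
)
parent.children[0].column_type = ColumnType.DONE
parent.children[1].column_type = ColumnType.DONE
print(f"{parent.title}: {parent.progress():.0%} of subtasks done")
```

Because progress is derived from column type and not from a column's display name, renaming or reordering columns does not change what counts as done.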

Read more in the kanban and flow metrics guide or explore the full documentation.

Request early access

Glacier is in private beta. Join the waitlist and we'll get you set up.